Understanding Quasi-Experimental Designs in Healthcare Research

When True Experiments Are Not Feasible

Many important healthcare questions cannot be addressed with randomized controlled trials. Ethical constraints may prevent withholding a beneficial policy from certain communities. Logistical realities—such as evaluating a hospital-wide electronic health record implementation—make random assignment impractical. In these situations, quasi-experimental designs provide a structured way to examine cause-and-effect relationships without full experimental control.

Quasi-experiments share some features with true experiments, particularly the presence of an intervention and a comparison condition. What they lack is random assignment. Participants or groups end up in intervention or comparison conditions through natural circumstances, administrative decisions, or self-selection. This distinction is critical because confounding variables may not be evenly distributed across conditions, which weakens causal claims.

Despite this limitation, quasi-experimental evidence is widely respected in health policy and program evaluation. When designed carefully and combined with appropriate statistical adjustments, these studies can offer compelling evidence that informs real-world practice. Students should view them not as inferior substitutes for RCTs but as legitimate tools suited to specific research contexts.

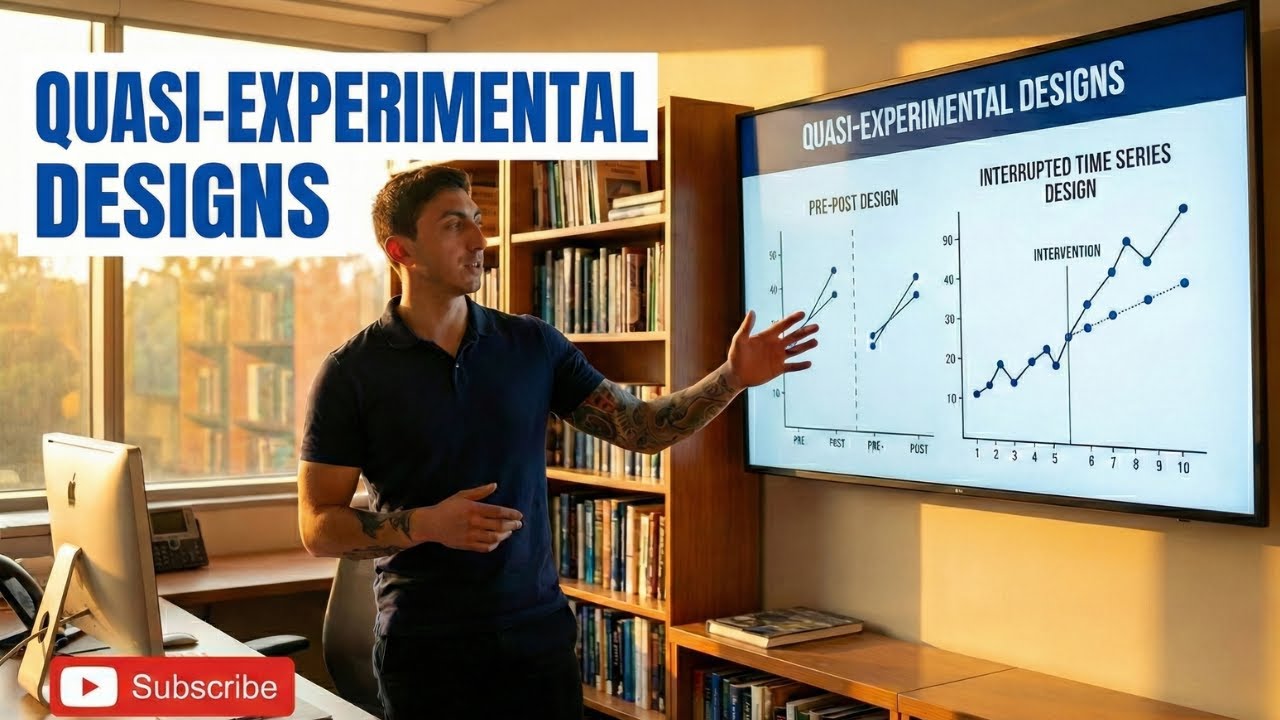

Pre-Post Designs and Their Variations

The simplest quasi-experimental approach measures outcomes before and after an intervention is introduced, then examines whether a meaningful change occurred. A hospital might track hand-hygiene compliance rates for three months, implement a new training program, and then measure compliance for another three months. Any improvement is tentatively attributed to the program.

The weakness of a basic one-group pre-post design is obvious: other events occurring between the two measurement periods could explain the change. Seasonal illness patterns, staff turnover, or simultaneous policy changes might be responsible rather than the intervention itself. These alternative explanations are called threats to internal validity, and they are the central challenge of quasi-experimental research.

Adding a non-equivalent comparison group strengthens the design considerably. If a similar hospital that did not implement the training shows no change during the same period, the case for attributing improvement to the intervention becomes more convincing. While still not as strong as randomization, this approach helps rule out many competing explanations and is frequently used in healthcare program evaluations across facilities and health systems.
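The comparison-group logic above can be turned into a small calculation. One common way to do this is a difference-in-differences estimate, a technique the text does not name but which follows the same reasoning: subtract the change seen in the comparison hospital from the change seen in the intervention hospital. The sketch below is only an illustration. The function name and the compliance figures are hypothetical, and the estimate is only credible if the two hospitals would have followed similar trends without the program.

```python
def difference_in_differences(treated_pre, treated_post, comparison_pre, comparison_post):
    """Return the treated group's change minus the comparison group's change."""
    treated_change = treated_post - treated_pre
    comparison_change = comparison_post - comparison_pre
    return treated_change - comparison_change


# Hypothetical hand-hygiene compliance rates (percent), invented for illustration.
did = difference_in_differences(
    treated_pre=62.0,      # intervention hospital, before training
    treated_post=78.0,     # intervention hospital, after training
    comparison_pre=60.0,   # comparison hospital, same "before" period
    comparison_post=63.0,  # comparison hospital, same "after" period
)
print(f"Estimated program effect: {did:.1f} percentage points")  # 16.0 - 3.0 = 13.0
```

Here the intervention hospital improved by 16 points, but 3 of those points also appeared at the hospital with no training, so only the remaining 13 are tentatively attributed to the program.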

Interrupted Time Series Analysis

Interrupted time series designs collect multiple data points before and after an intervention, creating a trend line that reveals whether the intervention altered the trajectory of an outcome. Rather than comparing just two snapshots—pre and post—this approach examines the pattern over many time points, making it far easier to detect whether a genuine shift occurred.

For example, a researcher studying the impact of a new prescribing guideline might gather monthly opioid prescription rates for two years before the guideline was issued and two years after. If the post-intervention data show a clear level change (an abrupt shift in the outcome) or slope change (a different rate of increase or decrease) relative to the pre-intervention trend, the case for an intervention effect is much stronger than a two-point comparison could support.

Interrupted time series designs are particularly valuable for policy evaluations because policies typically affect entire populations simultaneously, ruling out randomization. The design's reliance on multiple observations also makes it robust against one-time fluctuations that could mislead a simple pre-post comparison. Students should note, however, that co-occurring events—such as a national awareness campaign launched around the same time—remain a threat and must be addressed through design features or sensitivity analyses.
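The level change and slope change described in this section can be estimated with a simple segmented fit. The sketch below is a minimal illustration in plain Python, not a full analysis: it fits one straight line to the pre-intervention points and another to the post-intervention points, then compares them at the interruption. The function names and the monthly series are hypothetical and synthetic, and the code assumes independent observations, so it ignores autocorrelation and seasonality, which a real interrupted time series analysis would need to address.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def interrupted_time_series(y, n_pre):
    """Estimate level and slope change at an interruption after n_pre points."""
    times = list(range(len(y)))
    pre_intercept, pre_slope = fit_line(times[:n_pre], y[:n_pre])
    post_intercept, post_slope = fit_line(times[n_pre:], y[n_pre:])
    # Counterfactual: where the pre-intervention trend would sit at the first post point.
    expected_at_start = pre_intercept + pre_slope * n_pre
    observed_at_start = post_intercept + post_slope * n_pre
    return {
        "level_change": observed_at_start - expected_at_start,
        "slope_change": post_slope - pre_slope,
    }


# Synthetic monthly prescription rates: 24 months before and 24 months after a guideline.
# Built with a drop of 8 units at the guideline and a slope that falls by 1 unit per month.
n_pre = 24
series = [100.0 + 0.5 * t for t in range(n_pre)]
series += [100.0 + 0.5 * t - 8.0 - 1.0 * (t - n_pre) for t in range(n_pre, 2 * n_pre)]

result = interrupted_time_series(series, n_pre)
print(result)  # level_change close to -8, slope_change close to -1
```

Fitting the two segments separately gives the same point estimates as a single regression with terms for time, a post-intervention indicator, and time since the intervention. The single-regression form is usually preferred in practice because it also yields standard errors for both changes.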

Strengthening Causal Claims Without Randomization

Because quasi-experimental designs lack randomization, researchers employ several strategies to bolster confidence in their causal conclusions. One approach is to include multiple comparison groups or sites, making it less likely that observed effects are due to unique local circumstances. Another is to collect extensive baseline data that allows statistical adjustment for pre-existing differences between groups.

Sensitivity analyses play an important role as well. Researchers test how robust their findings are under different assumptions. For instance, they may ask how strongly an unmeasured confounder would need to be associated with both the intervention and the outcome to erase the observed effect. If the effect survives plausible alternative scenarios, stakeholders can be more confident in the conclusions.
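One way to make the unmeasured-confounder question concrete is the E-value proposed by VanderWeele and Ding, which the text above does not name and which is offered here only as an example of this kind of analysis. For an observed risk ratio RR of at least 1, the E-value is RR + sqrt(RR * (RR - 1)). It is the minimum strength of association, on the risk-ratio scale, that an unmeasured confounder would need with both the intervention and the outcome to fully explain away the estimate. The function name below is hypothetical and the risk ratio is invented.

```python
import math


def e_value(risk_ratio):
    """E-value for a point estimate expressed as a risk ratio."""
    if risk_ratio <= 0:
        raise ValueError("risk_ratio must be positive")
    # Protective estimates (RR < 1) are inverted so the formula applies.
    rr = risk_ratio if risk_ratio >= 1 else 1.0 / risk_ratio
    return rr + math.sqrt(rr * (rr - 1.0))


# Hypothetical observed risk ratio, invented for illustration.
observed_rr = 2.0
print(f"E-value: {e_value(observed_rr):.2f}")  # 2 + sqrt(2), about 3.41
```

A large E-value means only a strong unmeasured confounder could account for the finding. A value near 1 means even a weak one could.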

Transparent reporting is equally vital. Readers need to understand exactly how groups were formed, what covariates were controlled, and which threats to validity remain unaddressed. Guidelines such as the TREND statement (Transparent Reporting of Evaluations with Nonrandomized Designs) provide a structured checklist for reporting quasi-experimental studies, parallel to the CONSORT statement used for RCTs. Students who learn to apply these strategies are better positioned to produce quasi-experimental research that earns credibility in peer review and policy discussions.

Frequently Asked Questions

What makes a study quasi-experimental rather than truly experimental?

A quasi-experiment lacks random assignment of participants to groups. The intervention is still deliberately introduced, but participants end up in conditions through natural or administrative processes rather than a chance mechanism.

What is the biggest threat to a simple pre-post study?

History, meaning external events that occur between the pre and post measurements, is the primary threat. Without a comparison group, any concurrent change in the environment could be mistaken for an intervention effect.

How does an interrupted time series improve upon a basic pre-post design?

By collecting many data points before and after the intervention, an interrupted time series establishes a trend line that reveals whether the intervention changed the level or slope of the outcome. This makes it easier to distinguish a true effect from random variation.

Can quasi-experimental studies be published in top-tier journals?

Yes. Well-designed quasi-experimental studies are published frequently in leading healthcare and public health journals. Transparent reporting, appropriate statistical adjustments, and acknowledgment of limitations are key to acceptance.

What is the TREND statement?

The TREND statement is a reporting guideline for non-randomized evaluations of behavioral and public health interventions. It provides a checklist of items to include in a manuscript, similar to how CONSORT guides the reporting of randomized trials.
